If you’re looking for a cheap but capable laptop, our experts have browsed Dell’s Presidents’ Day sale and rounded up the best deals on our favorite laptops, monitors, and desktops.


Better than flowers – here are my favorite Lego sets from $12.99 that you can build together

Lego sets you can build together!


World’s largest monitor company designed a 4K 48-inch OLED dual-sided smart gaming display with metal feet, a magnetic power socket, and an Alienware-inspired sound system – but who will actually use it?

GP TV introduces dual-sided 4K OLED display combining gaming design, immersive audio, safety features, and uncertain real-world practicality.


Eureka Ergonomic Axion office chair review: an attractive mid-range throne with great ergonomic features

The Axion office chair from Eureka is a great do-it-all option, suitable for executives and gamers alike.


27 beautiful beige-inspired home office finds for creating your calming, neutral workspace

From champagne beige to stone oat, this is the office furniture, desk accessories, and essential gadgets and decor to create the right vibe.


Intel’s tough decision boosted AMD to record highs

Summary created by Smart Answers AI

In summary:

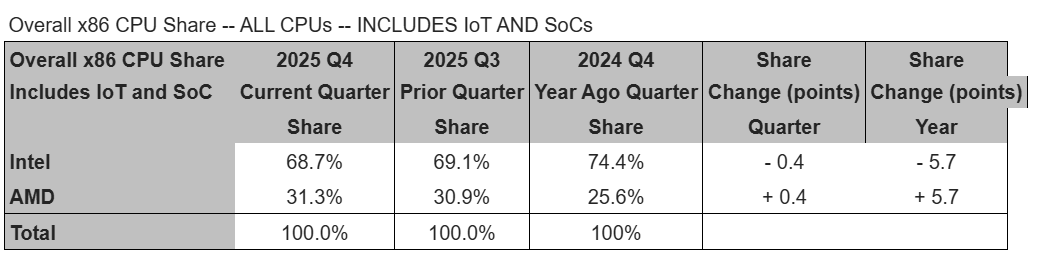

- PCWorld reports that Intel’s supply constraints and strategic focus on higher-margin server processors in Q4 2025 created an opportunity for AMD to capture record market share.

- AMD benefited significantly from Intel’s capacity reallocation, gaining ground in both mobile and desktop processors as the x86 market shifted from an 80-20 to a 70-30 Intel-AMD ratio.

- While both companies saw server CPU growth, Intel’s mobile client shipments suffered most, allowing AMD to achieve unprecedented consumer market penetration.

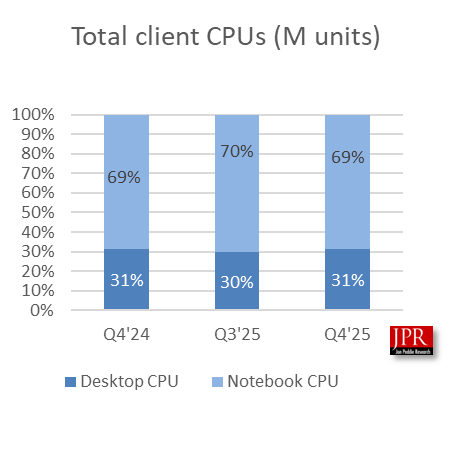

Shipments of x86 processors dropped from the third to the fourth quarter of 2025, as Intel’s supply constraints reined in PC processor shipments and helped AMD, especially in the mobile market.

AMD itself released some of the shipment estimates from Mercury Research on Wednesday, but the research firm provided further details on Thursday, including Intel’s market share. It also confirmed that AMD has now hit record share in both mobile and desktop processors.

Normally, the fourth quarter brings the highest sales of the year, as Black Friday and the winter holidays prompt consumers to snap up bargains on desktop CPUs, desktop PCs, and laptops. In this case, however, Intel made the conscious choice to limit consumer CPU sales and favor servers, after poor process yields and shortages forced a tough decision to prioritize higher-margin server parts. The slowdown also included fewer sales of AMD SoCs into consoles, which are heading into their seventh straight year without a refresh, though that could come in 2027, AMD chief executive Lisa Su said.

Historically, Intel held on to an 80-20 ratio in the CPU space, but that has repeatedly narrowed as AMD’s share has increased. Now, it’s more like 70-30.

Mercury Research

Excluding those SoCs, “AMD’s shipments significantly outgrew Intel’s, both sequentially and on year, resulting in strong share increases by both measures,” Mercury Research president Dean McCarron wrote in a note to reporters. “AMD saw far stronger than median seasonal growth across all segments in the quarter (except SoC gaming products not included in this calculation.) In contrast Intel’s desktop and mobile shipments were weaker than seasonal due to supply constraints, and while Intel’s server CPU growth slightly offset the downturn in client it was not enough to impact overall share change.”

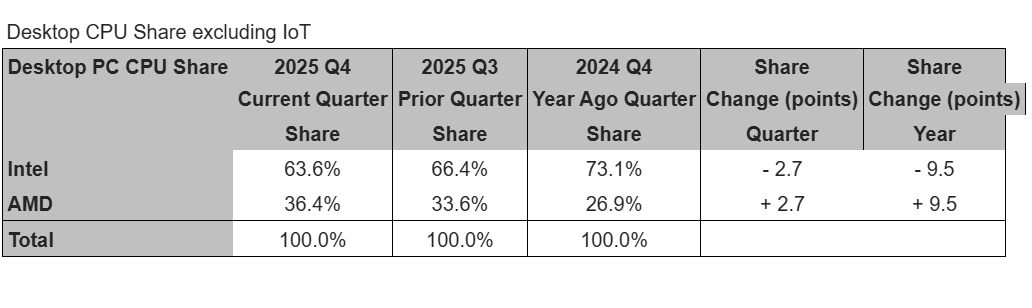

In desktops, AMD grew across all of its product lines, not just in high-end processors as in previous quarters. Growth favored mid-range products instead. Combine that with Intel’s decision to de-prioritize desktop products, and AMD again hit a record high in desktop CPU shipments.

Mercury Research

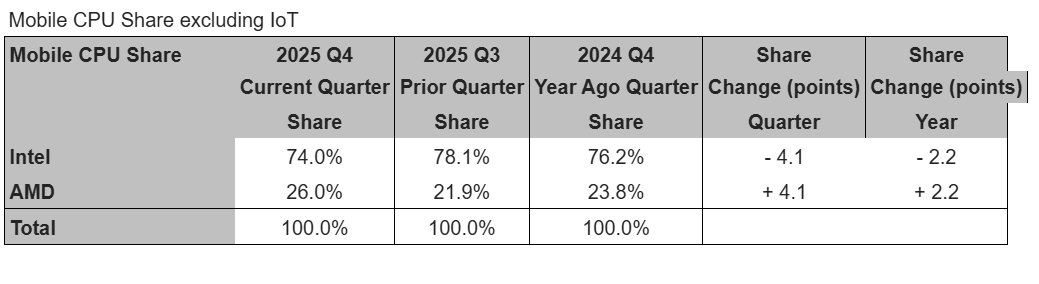

But AMD also hit a record high in its share of mobile processors.

“Intel’s capacity reallocation hit the company’s mobile client CPU shipments the hardest, resulting in Intel experiencing significant sequential and on-year declines in shipments, far below seasonal norms in what is typically an up quarter,” McCarron wrote. “In contrast, mobile client CPUs was AMD’s strongest segment in the quarter. This resulted in a large increase in AMD’s share of the mobile CPU market, which set a new record high in the quarter.”

Mercury Research

The wild card, as always, remains Arm.

“There’s a larger than typical uncertainty in our ARM estimates this quarter as strong PC sell-out made determining CPU sell-in more difficult than usual,” McCarron wrote. “However, due to Apple’s declines in the segment we’re reasonably sure overall ARM client shipments declined in the quarter, but won’t be surprised if we need to revise the figures in the next edition due to the uncertainty.”

Mercury’s McCarron estimated that Arm share in the PC space, including Apple Macs and Arm Chromebooks, should be about 13.3 percent. That’s slightly less than the 13.7 percent that segment saw a year ago, he said.

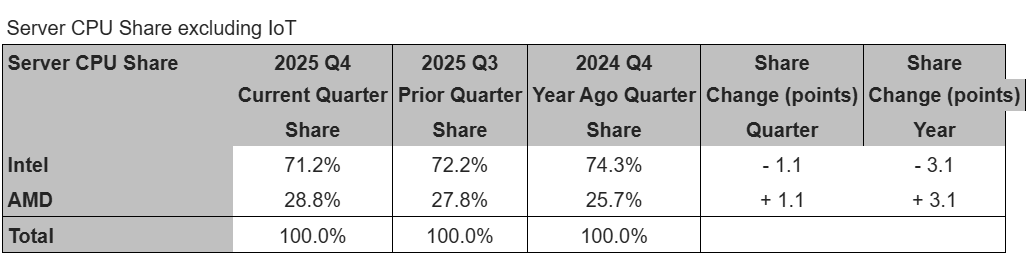

Intel’s renewed focus on the server market, along with AMD’s emphasis there, also brought significant growth in server shipments. Intel’s server shipments grew at double the seasonal average, McCarron wrote, while AMD’s grew at triple its average.

Mercury Research

Jon Peddie Research, a competing organization, reported slightly different numbers; it found that the global client CPU market grew 2.7 percent sequentially, while server CPU shipments increased 14.1 percent year over year.

“We think the PC CPUs’ growth was in line with seasonal buying behavior, albeit a bit low,” Jon Peddie, president of JPR, said in a note. “The influence of the up-again, down-again tariffs, and Microsoft’s withdrawal from support of the 2016 Windows 10, also had an effect. We expect Q1’26 to be down due to memory constraints and higher process.”

Jon Peddie Research

‘Your data is public’: Hacker warns victims after leaking 6.8 billion emails online

Someone posted 150GB of emails to the dark web, claiming to hold 6.8 billion unique email addresses.
